@aws-cdk/aws-s3

Define an unencrypted S3 bucket.

new Bucket(this, 'MyFirstBucket');

Bucket constructs expose the following deploy-time attributes:

bucketArn - the ARN of the bucket (e.g. arn:aws:s3:::bucket_name)

bucketName - the name of the bucket (e.g. bucket_name)

bucketUrl - the URL of the bucket (e.g. https://s3.us-west-1.amazonaws.com/onlybucket)

arnForObjects(...pattern) - the ARN of an object or objects within the bucket (e.g. arn:aws:s3:::my_corporate_bucket/exampleobject.png or arn:aws:s3:::my_corporate_bucket/Development/*)

urlForObject(key) - the URL of an object within the bucket (e.g. https://s3.cn-north-1.amazonaws.com.cn/china-bucket/mykey)

Define a KMS-encrypted bucket:

const bucket = new Bucket(this, 'MyEncryptedBucket', {
  encryption: BucketEncryption.Kms
});

// you can access the encryption key:
assert(bucket.encryptionKey instanceof kms.EncryptionKey);

You can also supply your own key:

const myKmsKey = new kms.EncryptionKey(this, 'MyKey');

const bucket = new Bucket(this, 'MyEncryptedBucket', {
  encryption: BucketEncryption.Kms,
  encryptionKey: myKmsKey
});

assert(bucket.encryptionKey === myKmsKey);

Use BucketEncryption.ManagedKms to use the S3 master KMS key:

const bucket = new Bucket(this, 'Buck', {
  encryption: BucketEncryption.ManagedKms
});

assert(bucket.encryptionKey == null);
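The three encryption examples above differ mainly in what bucket.encryptionKey ends up holding. The following is a minimal, library-free sketch of that rule as the examples describe it. EncryptionMode, KeyLike, EncryptionOptions and resolveEncryptionKey are hypothetical names introduced only for illustration and are not part of @aws-cdk/aws-s3; the placeholder id for the auto-created key is likewise an assumption.

```typescript
// Hypothetical model of the encryption modes shown above.
// None of these names exist in @aws-cdk/aws-s3.
type EncryptionMode = 'Unencrypted' | 'Kms' | 'ManagedKms';

interface KeyLike {
  readonly id: string;
}

interface EncryptionOptions {
  readonly encryption?: EncryptionMode;
  readonly encryptionKey?: KeyLike;
}

// Returns the key the bucket would expose, or undefined when there is none.
function resolveEncryptionKey(options: EncryptionOptions): KeyLike | undefined {
  if (options.encryption === 'Kms') {
    // Use the caller's key if one was supplied, otherwise create one.
    return options.encryptionKey ?? { id: 'auto-created-key' };
  }
  // 'ManagedKms' (S3 owns the key) and unencrypted buckets expose no key.
  return undefined;
}
```

Under these assumptions, only the Kms mode ever yields a key, which matches the three assertions in the examples above.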

By default, a bucket policy will be automatically created for the bucket upon the first call to addToPolicy(statement):

const bucket = new Bucket(this, 'MyBucket');
bucket.addToPolicy(statement);
// we now have a policy!
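The behaviour described here is a lazy-initialization pattern: no policy exists until one is supplied or the first statement is added. The sketch below illustrates the pattern in isolation. SketchBucket, SketchPolicy and Statement are hypothetical stand-ins, not the library's Bucket or BucketPolicy classes, and the real implementation may differ.

```typescript
// Hypothetical stand-ins used only to illustrate lazy policy creation.
interface Statement {
  readonly action: string;
  readonly resource: string;
}

class SketchPolicy {
  public readonly statements: Statement[] = [];
}

class SketchBucket {
  // Stays undefined until a policy is supplied or first needed.
  public policy?: SketchPolicy;

  constructor(policy?: SketchPolicy) {
    this.policy = policy;
  }

  public addToPolicy(statement: Statement): void {
    if (!this.policy) {
      // First call: create the policy on demand.
      this.policy = new SketchPolicy();
    }
    this.policy.statements.push(statement);
  }
}
```

A bucket that never calls addToPolicy never gets a policy in this model, while a bucket constructed with its own policy reuses that object for every statement.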

You can bring your own policy as well:

const policy = new BucketPolicy(this, 'MyBucketPolicy');
const bucket = new Bucket(this, 'MyBucket', { policy });

This package also defines an Action that allows you to use a Bucket as a source in CodePipeline:

import codepipeline = require('@aws-cdk/aws-codepipeline');
import s3 = require('@aws-cdk/aws-s3');

const sourceBucket = new s3.Bucket(this, 'MyBucket', {
  versioned: true, // a Bucket used as a source in CodePipeline must be versioned
});

const pipeline = new codepipeline.Pipeline(this, 'MyPipeline');

const sourceStage = new codepipeline.Stage(this, 'Source', {
  pipeline,
});

const sourceAction = new s3.PipelineSource(this, 'S3Source', {
  stage: sourceStage,
  bucket: sourceBucket,
  bucketKey: 'path/to/file.zip',
  artifactName: 'SourceOutput', // name can be arbitrary
});

// use sourceAction.artifact as the inputArtifact to later Actions...

You can also add the Bucket to the Pipeline directly:

// equivalent to the code above:
const sourceAction = sourceBucket.addToPipeline(sourceStage, 'S3Source', {
  bucketKey: 'path/to/file.zip',
  artifactName: 'SourceOutput',
});

You can create a Bucket construct that represents an external/existing/unowned bucket by using the Bucket.import factory method.

This method accepts an object that adheres to BucketRef, which basically includes tokens for the bucket's attributes.

This means that you can define a BucketRef using token literals:

const bucket = Bucket.import(this, {
  bucketArn: new BucketArn('arn:aws:s3:::my-bucket')
});

// now you can just call methods on the bucket
bucket.grantReadWrite(user);

The bucket.export() method can be used to "export" the bucket from the current stack. It returns a BucketRef object that can later be used in a call to Bucket.import in another stack.

Here's an example.

Let's define a stack with an S3 bucket and export it using bucket.export().

class Producer extends Stack {
  public readonly myBucketRef: BucketRef;

  constructor(parent: App, name: string) {
    super(parent, name);

    const bucket = new Bucket(this, 'MyBucket');
    this.myBucketRef = bucket.export();
  }
}

Now let's define a stack that requires a BucketRef as an input and uses Bucket.import to create a Bucket object that represents this external bucket. Grant a user principal created within this consuming stack read/write permissions to this bucket and contents.

interface ConsumerProps {
  // interface members cannot carry an accessibility modifier such as `public`
  userBucketRef: BucketRef;
}

class Consumer extends Stack {
  constructor(parent: App, name: string, props: ConsumerProps) {
    super(parent, name);

    const user = new User(this, 'MyUser');
    const userBucket = Bucket.import(this, props.userBucketRef);
    userBucket.grantReadWrite(user);
  }
}

Now, let's define our CDK app to bind these together:

const app = new App(process.argv);

const producer = new Producer(app, 'produce');

new Consumer(app, 'consume', {
  userBucketRef: producer.myBucketRef
});

process.stdout.write(app.run());

The Amazon S3 notification feature enables you to receive notifications when certain events happen in your bucket as described under S3 Bucket Notifications of the S3 Developer Guide.

To subscribe to bucket notifications, use the bucket.onEvent method. The bucket.onObjectCreated and bucket.onObjectRemoved methods can also be used for these common use cases.

The following example will subscribe an SNS topic to be notified of all s3:ObjectCreated:* events:

const myTopic = new sns.Topic(this, 'MyTopic');
bucket.onEvent(s3.EventType.ObjectCreated, myTopic);

This call will also ensure that the topic policy can accept notifications for this specific bucket.

The following destinations are currently supported:

sns.Topic

sqs.Queue

lambda.Function

It is also possible to specify S3 object key filters when subscribing. The following example will notify myQueue when objects whose keys have the foo/ prefix and the .jpg suffix are removed from the bucket.

bucket.onEvent(s3.EventType.ObjectRemoved, myQueue, { prefix: 'foo/', suffix: '.jpg' });
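To make the filter semantics concrete, here is a small sketch of how a prefix/suffix pair selects object keys: a key must satisfy every rule that is present. KeyFilter and keyMatchesFilter are hypothetical helpers written for illustration. The actual matching is performed by S3 itself, not by the CDK library.

```typescript
// Hypothetical helper mirroring the { prefix, suffix } filter shape used above.
interface KeyFilter {
  readonly prefix?: string;
  readonly suffix?: string;
}

// A key matches when it satisfies every rule present in the filter.
function keyMatchesFilter(key: string, filter: KeyFilter): boolean {
  const prefix = filter.prefix;
  const suffix = filter.suffix;
  const prefixOk =
    prefix === undefined || key.substring(0, prefix.length) === prefix;
  const suffixOk =
    suffix === undefined || key.substring(key.length - suffix.length) === suffix;
  return prefixOk && suffixOk;
}
```

With the filter from the example, a key such as foo/cat.jpg would match, while foo/cat.png and bar/cat.jpg would not.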

0.9.2 (2018-09-20)

NOTICE: This release includes a framework-wide breaking change which changes the type of all the string resource attributes across the framework. Instead of using strong-types that extend cdk.Token (such as QueueArn, TopicName, etc), we now represent all these attributes as normal strings, and codify the tokens into the string (using the feature introduced in #168).

Furthermore, the cdk.Arn type has been removed. In order to format/parse ARNs, use the static methods on cdk.ArnUtils.

See motivation and discussion in #695.
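For readers unfamiliar with what formatting and parsing an ARN involves, the sketch below splits an ARN into its colon-separated components and joins them back. It is a standalone illustration of the general arn:partition:service:region:account:resource layout. ArnParts, parseArn and formatArn are hypothetical names, and this is not the signature or behaviour of the static methods on cdk.ArnUtils.

```typescript
// Hypothetical ARN helpers; not the cdk.ArnUtils API.
interface ArnParts {
  readonly partition: string;
  readonly service: string;
  readonly region: string;
  readonly account: string;
  readonly resource: string;
}

function parseArn(arn: string): ArnParts {
  const parts = arn.split(':');
  if (parts.length < 6 || parts[0] !== 'arn') {
    throw new Error('Malformed ARN: ' + arn);
  }
  return {
    partition: parts[1],
    service: parts[2],
    region: parts[3],
    account: parts[4],
    // The resource part may itself contain colons, so rejoin the remainder.
    resource: parts.slice(5).join(':'),
  };
}

function formatArn(p: ArnParts): string {
  return ['arn', p.partition, p.service, p.region, p.account, p.resource].join(':');
}
```

For a bucket ARN such as arn:aws:s3:::my-bucket, the region and account components are empty strings and the resource is my-bucket.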

addPartitionKey and addSortKey methods to be consistent across the board. (#720) (e6cc189)

policy argument (#730) (a79190c), closes #672

The CDK Construct Library for AWS::S3

Research

Security News

The Socket Research Team has discovered six new malicious npm packages linked to North Korea’s Lazarus Group, designed to steal credentials and deploy backdoors.

Security News

Socket CEO Feross Aboukhadijeh discusses the open web, open source security, and how Socket tackles software supply chain attacks on The Pair Program podcast.

Security News

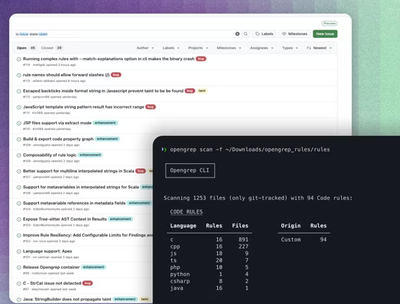

Opengrep continues building momentum with the alpha release of its Playground tool, demonstrating the project's rapid evolution just two months after its initial launch.